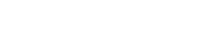

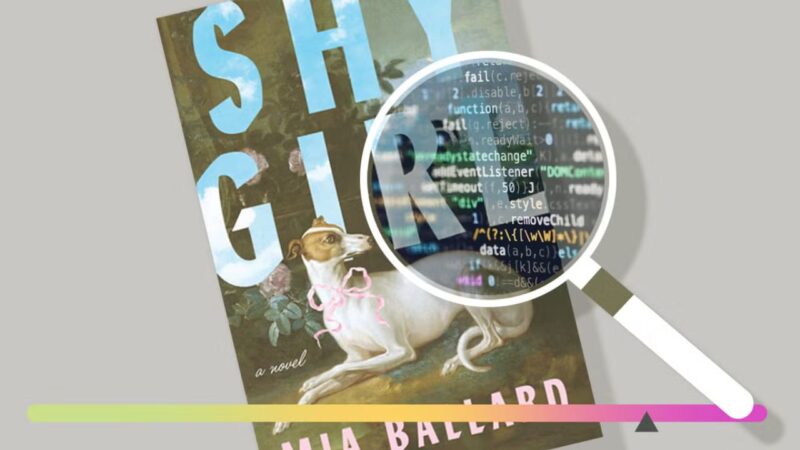

There is a particular kind of silence that follows a public execution. Not a violent one — no blood, no courtroom drama — just the quiet, efficient cancellation of a person’s career, carried out in the span of a single news cycle. That is what happened to Mia Ballard in March of 2026, and the story has not stopped reverberating since.

Her horror novel Shy Girl — a dark, disturbing descent into obsession and captivity — went from grassroots TikTok sensation to publishing industry cautionary tale in under 48 hours. The weapon? A single number: 78 percent.

But before we unpack that number, we need to understand what it destroyed — and whether it deserved to destroy anything at all.

From Amazon to Hachette: A Self-Published Dream

In February 2025, Mia Ballard did something millions of aspiring authors dream of and relatively few actually do. She published her own book. No agent. No publishing deal. No safety net. Just Shy Girl — a “femgore revenge” horror novel centered on a young woman with severe OCD who agrees to live as a wealthy man’s human pet in exchange for financial relief — uploaded to Amazon with her name on the cover.

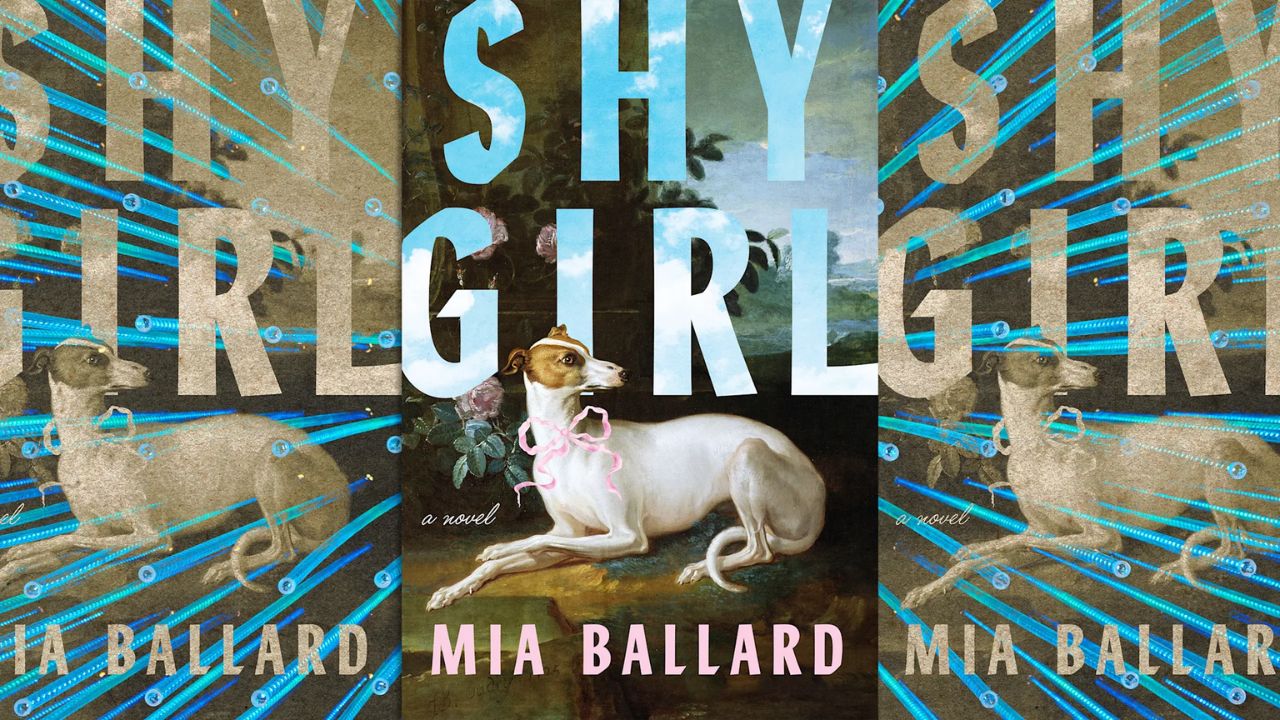

The horror community, always hungry for something genuinely unsettling, found it on TikTok. Word spread in the organic, unstoppable way that grassroots literary success occasionally still happens. Nearly five thousand readers rated it on Goodreads. Reviewers called it corrosive but addictive, dark and visceral. Its score settled at a respectable 3.5 out of 5 stars — not a literary triumph, perhaps, but unambiguous evidence that real human beings were reading it, feeling something, and recommending it to others.

Then Hachette came calling. One of the world’s five largest publishers (the “Big Five” that together control the lion’s share of English-language commercial fiction) acquired the novel. They assigned an editor. Designed new covers. Scheduled a UK release for November 2025 and a US release the following May through their Orbit imprint. Sent advance copies to established reviewers. Built an entire marketing campaign around a book that had started as one woman’s solo bet on herself.

For a few months, Shy Girl existed in that golden liminal space: a self-published underdog turned traditionally published author. The kind of story publishers love to champion.

Then the internet got hold of it.

The Mob Assembles

The accusations did not arrive all at once. They accumulated, layer by layer, in the way internet pile-ons always do.

It started with a Reddit post in January 2026 — a user claiming to be a veteran book editor, dissecting the novel’s prose and cataloguing what they identified as hallmarks of AI-generated writing. The post went viral within the book community. Then came a nearly three-hour-long YouTube video by a creator called frankie’s shelf, titled with characteristic bluntness: “i’m pretty sure this book is ai slop.” It accumulated 1.2 million views.

The video pointed to specific textual patterns: the word “sharp” appearing with unusual frequency, excessive “rule of three” sentence constructions, descriptions that seemed to multiply and repeat as if padding out a word count, and dialogue that moved in odd, slightly mechanical rhythms. None of these is, on its own, conclusive. Writers develop tics. Genre conventions repeat. A tired author revising at 2 a.m. can produce sentences that read strangely. But stacked together, they made for a compelling, if circumstantial, case.

The internet had made up its mind. All that remained was for someone to provide a number.

Enter Pangram: The 78% That Changed Everything

That number came from Pangram, an AI detection software company whose CEO, Max Spero, scanned a copy of Shy Girl and publicly posted the results on X. His tool’s verdict: 78 percent AI-generated.

The New York Times picked up the story. Within 24 hours of the article’s publication, Hachette canceled the book, pulled it from every retailer, and issued a statement about its “enduring commitment to protecting original creative expression and storytelling.” Ballard, who had been sending panicked emails late on a Thursday night, was suddenly a disgraced author. It was the first time a Big Five publisher had ever pulled a commercially published book over AI allegations.

The 78 percent figure became the story. It was repeated in every outlet that covered the scandal. It had the clean authority of a scientific finding — a decimal point away from certainty. It felt like proof.

But was it?

The Pirated Copy Problem: A Scandal Within the Scandal

Here is where the story takes a turn that should have made every headline, and didn’t.

When Pangram’s CEO posted his scan results publicly on X, the report contained something that should have raised immediate alarm: embedded throughout the flagged text, appearing over and over, was a URL — OceanofPDF.com. If you are not familiar with it, OceanofPDF is a well-known piracy website that illegally distributes copyrighted books as downloadable PDFs.

This means that the document Pangram used to produce its damning 78 percent figure was almost certainly not the original manuscript, and not the professionally edited Hachette edition. It appears, based on everything publicly available, to have been a pirated copy scraped from an illegal file-sharing site.

Think about what that means for a moment. AI detection tools work by analyzing text. But pirated PDFs are not clean documents — they are often processed through OCR (optical character recognition) software, which introduces conversion errors, formatting anomalies, irregular spacing, and corrupted characters. Any of these artifacts could interfere with how a detection algorithm reads the text, producing results that don’t accurately reflect the original work.

The 78 percent figure that ended Mia Ballard’s career may have been generated from a document that was already compromised before a single algorithm looked at it.

The New York Times, which had linked to Spero’s post in its own article, apparently did not look closely enough at the preview to notice. Neither, it seems, did anyone at Hachette before they made the decision to pull the book.

The Conflict of Interest Nobody Wanted to See

The pirated copy issue would be damning enough on its own. But writer Andrea Drey, whose deep-dive piece “The Shy Girl AI Scandal Is Way Worse Than You Think” on Substack became essential reading on the subject, uncovered something else: the chain of events leading to the New York Times story was not as organic as it appeared.

The journalist who brought the story to the Times had learned about Shy Girl from Asia Laird — described in his own account as “recently hired at Pangram.” What he did not mention, and what a quick search of LinkedIn and ZoomInfo confirmed, is that Laird’s title at Pangram was Founding Account Executive in Sales and Business Development. Her literal job was to sell Pangram’s product.

So the person who surfaced the story to the journalist who brought it to the New York Times was a sales employee at the company whose detection tool produced the key evidence. The same tool that generated the 78 percent number. The same number that informed the decision to cancel Ballard’s contract.

This is not an allegation of conspiracy. It may well be that everyone involved acted in good faith. But it is a spectacular conflict of interest — and not a single major outlet that covered the story mentioned it.

The journalist himself, Drey notes, had a pre-existing professional relationship with Pangram’s CEO. His consulting firm worked with publishers and with the tech companies that sell products to publishers. And in his own accounting of his role in the affair, he simultaneously argued that detection reports should be used “only for guidance, not as proof of guilt” and that there must be “an underlying presumption of innocence” — principles that apparently did not apply when his own evidence was being used to end Ballard’s career without any such presumption.

How Reliable Is AI Detection, Really?

The 78 percent figure was presented, and largely received, as though it were a measurement of a fixed, objective fact. It is not.

AI detection tools work by analyzing statistical patterns in text — primarily metrics called perplexity (how predictable a piece of writing is, word by word) and burstiness (how much sentence length varies). Human writing tends to be “bursty” — mixing short punchy sentences with longer, more complex ones. AI writing tends to be more uniform. Detection tools use these signals, along with machine learning classifiers trained on large datasets of human and AI text, to return a probability estimate.
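To make those two signals concrete, here is a toy sketch in Python. It assumes burstiness is measured as the coefficient of variation of sentence lengths, and it stands in for a real language model with a deliberately simple, hypothetical add-one-smoothed unigram model. The function names are illustrative only; this is not how Pangram or any other commercial detector computes its score, since those tools combine such signals with trained classifiers.

```python
import math
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, splitting crudely on '.', '!' and '?'."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (population stdev / mean).

    0.0 means every sentence has the same length; larger values mean the
    prose mixes short and long sentences more ("burstier").
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; punctuation is dropped."""
    return re.findall(r"[a-z']+", text.lower())


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    A toy stand-in for the large language models real detectors use: lower
    values mean the words in `text` are more predictable given `reference`.
    All unseen words share a single "unknown" slot so probabilities sum to 1.
    """
    ref_tokens = tokenize(reference)
    counts: dict[str, int] = {}
    for tok in ref_tokens:
        counts[tok] = counts.get(tok, 0) + 1
    denom = len(ref_tokens) + len(counts) + 1  # N + V + 1 (the unknown slot)
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text contains no words")
    log_prob = sum(math.log((counts.get(t, 0) + 1) / denom) for t in tokens)
    return math.exp(-log_prob / len(tokens))


if __name__ == "__main__":
    uniform = "The room was dark. The air was cold. The door was shut. The hall was long."
    varied = "Dark. The air in the room had gone cold hours ago, and nobody had noticed. Shut the door."
    print(burstiness(uniform))  # 0.0
    print(burstiness(varied) > burstiness(uniform))  # True
```

The uniform passage was written by a person for this example, yet it scores a burstiness of exactly zero, the most “machine-like” value this metric can produce. Signals like these measure surface statistics of the text, not who or what wrote it.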

The key word is estimate.

Multiple independent studies have found that no AI detector achieves 100 percent reliability. A study published in the International Journal for Educational Integrity in 2026 documented “inconsistent accuracy, high false-positive rates, and documented biases against non-native writers” across current detection tools. A 2025 Chicago Booth Review study concluded that AI detectors “vary widely in reliability and are unsuitable as standalone tools for decisions.” Even the best-performing tools in independent testing — Originality AI and Copyleaks — struggle significantly with paraphrased content and produce false positives at rates of between 10 and 30 percent on certain types of writing, particularly from non-native English speakers.

Researchers have also found that simple stylistic repetition, unusual genre conventions, or even a writer’s natural cadence can trigger false positives. A writer who favors certain sentence structures, who uses a small set of adjectives with deliberate intensity, or who writes in a genre with established formal patterns — horror, for instance, with its rhythmic dread and obsessive circling back — could easily score high on an AI detection report without ever having touched a chatbot.

None of this is to say that Ballard definitely did not use AI. She may have. Her own statement to the Times acknowledged that an editor she had hired to revise the self-published version had used AI tools, though she insisted she had not done so herself. The question of guilt or innocence is, in many ways, beside the point.

The point is: a probabilistic score from a tool trained on scraped data, applied to what appears to have been a pirated copy of a book, became the sole evidence used to destroy a woman’s career in under 24 hours. No human read the manuscript carefully and compared it to Ballard’s other writing. No linguistic expert was consulted. No version history was examined. No fair process was followed.

The Speed of Cancellation

Hachette’s decision to pull the book the same day the New York Times article ran is, in retrospect, one of the most troubling aspects of the whole affair.

Hachette had acquired this book. Their editorial and legal teams had reviewed it. They had invested in production, covers, marketing, and distribution. They had the manuscript, the edit history, the contracts. If anyone was positioned to conduct a real, careful, internal investigation of the AI allegations, it was them.

Instead, they canceled within 24 hours.

Their spokeswoman noted that Hachette “requires all submissions to be original to the authors and that the authors disclose whether AI is used during the writing process.” That is a reasonable policy. But publishing a brief statement about policy principles is not the same as investigating whether a specific book violated them. A publisher that positions itself as a fierce defender of creative rights should, at minimum, have read its own book before deciding its author had betrayed those rights.

It’s also worth noting what Hachette already knew before the AI controversy ever surfaced: Ballard’s original self-published cover had used an image taken from Pinterest without the permission of the artist, British painter Whyn Lewis. The artistic theft was public knowledge. Hachette’s response had been to commission new covers that, by multiple accounts, carefully recreated the emotional mood of Lewis’s original painting. As far as anyone can tell, Hachette did so without contacting Lewis herself or compensating her for the work that inspired the new designs.

A publisher willing to work around a documented case of artistic theft apparently drew a hard line at a probabilistic AI detection score. The inconsistency is striking.

The Racialized Dimension

The internet’s treatment of Ballard herself added another layer that several commentators have rightly raised.

Ballard, a self-identified woman of color, faced a volume and intensity of online vitriol that stands in stark contrast to how other authors — overwhelmingly white and male — have been treated in similar or worse circumstances. Authors like James Frey, who openly admitted in a Vanity Fair interview to using AI in his work, faced no meaningful industry consequences. A publishing landscape in which, by one count, approximately 95 percent of fiction published between 1950 and 2018 was authored by white writers is not a neutral background against which to evaluate who gets the benefit of the doubt.

Ballard herself noted the asymmetry: “My name is ruined for something I didn’t even personally do.” Whether or not you believe her claim of innocence, the speed and totality of her cancellation — without a real investigation, on the basis of contested evidence from a possibly compromised source, surfaced by a company with a financial interest in the story — deserves more scrutiny than it has received.

What This Means for Every Author

The Shy Girl scandal is not just one author’s misfortune. It is a precedent. As the first time a Big Five publisher has ever pulled a book over AI allegations, it has set a template for how every future accusation will be handled.

And the template is: one number, one day, no process.

That should frighten every writer. Not because authors should be free to pass off AI-generated text as their own — they shouldn’t. But because the tools being used to catch them are not reliable enough to carry that kind of responsibility. Because the incentive structures around those tools are not clean. Because the internet will sometimes be right about things, but the speed of internet justice has never been matched by the care of internet evidence-gathering.

A writer who favors unusual repetition as a stylistic device, who writes in a genre with formal conventions that mimic certain AI patterns, who hired an editor who cut corners — that writer is now one viral YouTube video away from their career ending, with no due process, no appeal, and no one at a major institution willing to slow down long enough to ask if the accusation is actually true.

The Question Nobody Is Asking

In the weeks since the scandal broke, the conversation has largely revolved around the same axis: did she or didn’t she? It is the most human of questions, and also the least useful one.

The more important questions are these:

Who gets to decide? A company whose revenue depends on publishing industry anxiety about AI? A YouTube creator with 1.2 million views and a compelling narrative? A journalist with a pre-existing relationship with the CEO of an AI detection firm?

What counts as evidence? A probabilistic score from a tool that may have been applied to a pirated document? Stylistic pattern-matching that can’t distinguish a deliberate artistic choice from a language model’s output? A Reddit thread from an anonymous commenter claiming relevant expertise?

What process does a person deserve before losing their livelihood? One day? A week? The same careful consideration that publishers apply to their other editorial decisions — which is to say, months of work by multiple professionals?

And who pays the price when the tools are wrong? Not the detection company. Not the publisher. Not the journalist. Not the YouTube creator. Just the person whose name is on the book.

What Comes Next

The future of AI in publishing is genuinely uncertain, and the Shy Girl case will not settle anything — except, perhaps, to demonstrate how badly the industry needs proper frameworks before it starts making more of these decisions.

Detection tools will get better. They will also get worse in specific, unexpected ways as AI writing evolves and hybridizes with human writing in increasingly complex workflows. The line between “AI-assisted” and “AI-generated” will become progressively harder to locate. Publishers will face mounting pressure to take positions, and those positions will be shaped as much by public relations as by principle.

What the Shy Girl scandal should have produced — and hasn’t yet — is a serious industry conversation about burden of proof. About what standards of evidence are appropriate before a publisher cancels a contract. About whether a detection score alone can ever justify ending an author’s career. About the conflicts of interest embedded in a system where the companies selling detection tools are also the companies surfacing stories to journalists.

Instead, we got a number. 78 percent. Repeated across hundreds of outlets, unexamined, uncontested, carrying all the authority of hard data and none of its actual requirements.

A Final Word on Mia Ballard

Whatever Mia Ballard did or didn’t do — whatever role AI played in the writing of Shy Girl, whatever decisions she made in self-publishing a book that may or may not have been wholly her own — she was failed by every institution that had the power to handle her story responsibly.

She was failed by a detection company that posted its results publicly before anyone had checked the integrity of the source document. By a journalist who did not disclose the professional relationships that brought the story to him. By a publisher that canceled her contract in the time it takes most institutions to schedule a meeting. By a media ecosystem that repeated a contested number until it felt like fact.

“This controversy has changed my life in many ways,” Ballard wrote in her statement. “My mental health is at an all-time low. And my name is ruined for something I didn’t even personally do.”

Maybe she used AI. Maybe she didn’t. Maybe the truth is somewhere in the messy, complicated middle — an editor making a bad decision, a writer who didn’t look closely enough, a book that exists in an ethical gray zone that our current tools aren’t remotely equipped to navigate.

But 78 percent is not a verdict. It never was. And until the publishing industry — and the internet — is willing to hold that complexity rather than collapse it into a number, more careers will end this way. Quickly. Cleanly. Without anyone stopping to ask if it was right.